Teaching AI to "see" websites like we do made it much more capable

By using images alongside text rather than HTML alone, Tencent's AI can now complete over 55% of its tasks on sites such as Google, Amazon, and Wikipedia

Large language models (LLMs) make it possible to build chatbots and other AI applications that understand natural language instructions. Yet most existing chatbots and virtual assistants are very limited when it comes to actually finding information online: they typically cannot browse websites on their own to locate facts or answers to questions.

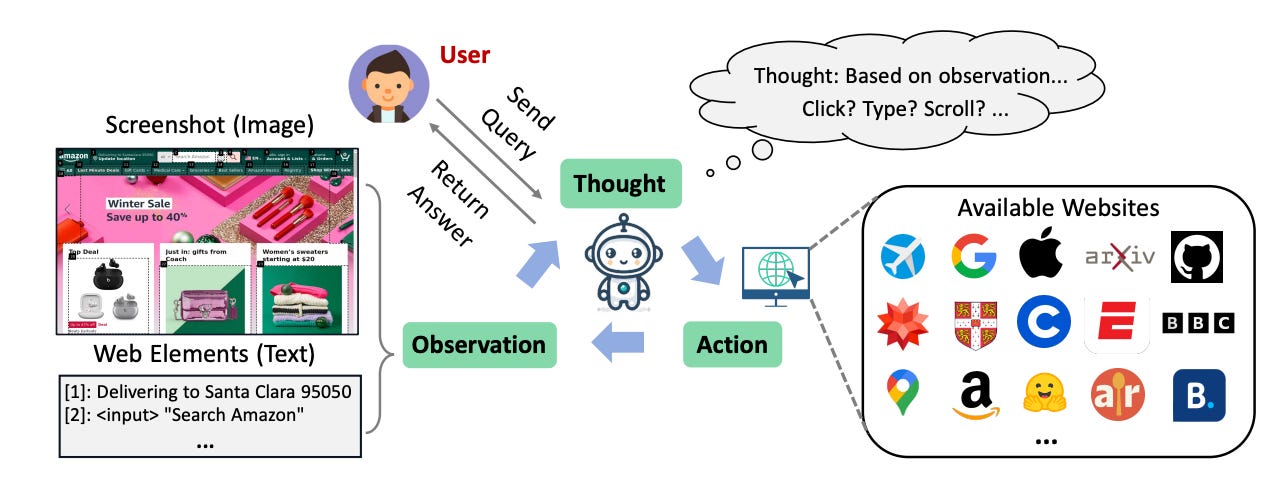

New research from scientists at Tencent AI Lab explores training AI agents that can navigate the web autonomously, much as humans browse and search for information. The work focuses on an LLM-powered agent dubbed WebVoyager (no, not that Voyager), which uses both textual and visual inputs to interact with a web browser and complete user instructions by extracting information from real-world websites. The results are promising: WebVoyager successfully completed over 55% of complex web tasks spanning popular sites like Google, Amazon, and Wikipedia.

Equipping AI systems with more human-like web browsing abilities could enable the next generation of capable and useful virtual assistants. Rather than just responding based on limited knowledge, they could independently look up answers online just as a person would. This research represents an important step toward that goal.

The Challenge of Browsing the Web
