PubDef: Defending Against Transfer Attacks Without Hurting Performance

Adversarial attacks pose a serious threat to ML models. But most proposed defenses hurt performance on clean data too much to be practical.

Adversarial attacks pose a serious threat to the reliability and security of machine learning systems. By making small perturbations to inputs, attackers can cause models to produce completely incorrect outputs. Defending against these attacks is an active area of research, but most proposed defenses have major drawbacks.

This paper (repo here) from researchers at UC Berkeley introduces a new defense called PubDef that makes progress on this problem. PubDef achieves much higher robustness against a realistic class of attacks, transfer attacks, while maintaining accuracy on clean inputs. This post explains the context of the research, how PubDef works, its results, and its limitations. Let's go.


The Adversarial Threat Landscape

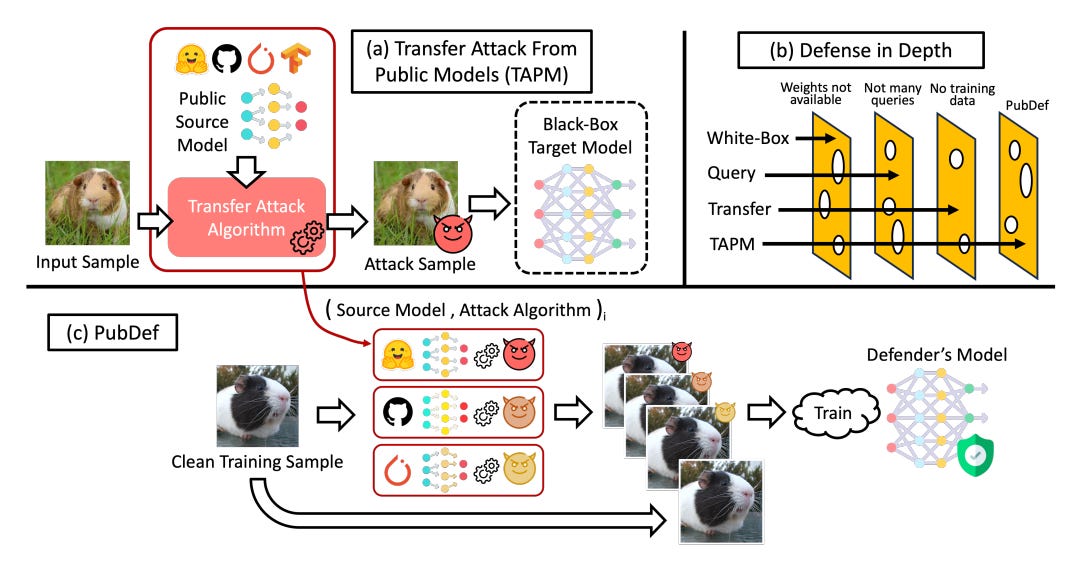

Many types of adversarial attacks have been studied. The most common type is the white-box attack, in which the adversary has full access to the model's parameters and architecture. This access lets them compute gradients and precisely craft inputs that cause misclassifications. Defenses like adversarial training have been proposed, but they degrade performance on clean inputs too much.
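To make the gradient step concrete, here is a minimal sketch of a white-box attack on a toy logistic-regression model. It uses the fast gradient sign method (FGSM), a standard attack that this post does not name, and the weights, input, and `eps` budget are all hypothetical values chosen for illustration.

```python
import math

def predict_proba(w, b, x):
    """Probability of class 1 under a logistic-regression model."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(-z))

def input_gradient(w, b, x, y):
    """Gradient of the cross-entropy loss with respect to the input x.
    For logistic regression this is (p - y) * w."""
    p = predict_proba(w, b, x)
    return [(p - y) * wi for wi in w]

def sign(v):
    return (v > 0) - (v < 0)

def fgsm(w, b, x, y, eps):
    """Move each feature by eps in the direction that increases the loss."""
    grad = input_gradient(w, b, x, y)
    return [xi + eps * sign(gi) for xi, gi in zip(x, grad)]

# Hypothetical model and input (assumptions, not taken from the paper).
w, b = [2.0, -1.0, 0.5], 0.0
x, y = [0.3, 0.1, 0.2], 1

x_adv = fgsm(w, b, x, y, eps=0.3)
clean_p = predict_proba(w, b, x)      # above 0.5: classified as class 1
adv_p = predict_proba(w, b, x_adv)    # below 0.5: prediction flipped
print(clean_p > 0.5, adv_p > 0.5)
```

The attacker needs `w` and `b` to compute the gradient, which is exactly the access a white-box setting assumes.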

Transfer attacks are more realistic. The attacker crafts adversarial examples against an accessible surrogate model, hoping they will transfer and fool the victim model as well. Transfer attacks are easy to execute and don't require any access to the victim model.
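The transfer setting can be sketched in the same toy style. In the example below, the attacker only ever touches a surrogate model, and the resulting input is then handed to a separate victim model. Both sets of weights are hypothetical and deliberately similar; in practice, transfer is not guaranteed and depends on how closely the surrogate resembles the victim.

```python
import math

def predict_proba(w, b, x):
    """Probability of class 1 under a logistic-regression model."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(-z))

def sign(v):
    return (v > 0) - (v < 0)

def craft_on_surrogate(w_s, b_s, x, y, eps):
    """FGSM step computed from the surrogate's gradient only."""
    p = predict_proba(w_s, b_s, x)
    grad = [(p - y) * wi for wi in w_s]
    return [xi + eps * sign(gi) for xi, gi in zip(x, grad)]

# Hypothetical surrogate (attacker has full access to it).
w_surrogate, b_surrogate = [2.0, -1.0, 0.5], 0.0
# Hypothetical victim (attacker never reads these weights).
w_victim, b_victim = [1.8, -1.2, 0.7], 0.1

x, y = [0.3, 0.1, 0.2], 1
x_adv = craft_on_surrogate(w_surrogate, b_surrogate, x, y, eps=0.3)

victim_clean = predict_proba(w_victim, b_victim, x)
victim_adv = predict_proba(w_victim, b_victim, x_adv)
transferred = victim_clean > 0.5 and victim_adv < 0.5
print("attack transferred:", transferred)
```

Note that `craft_on_surrogate` never receives `w_victim` or `b_victim`, and the victim is queried only to check the result.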

Query-based attacks make repeated queries to the model to infer its decision boundaries. Defenses exist to detect and limit these attacks by monitoring usage.
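As a rough illustration of what "monitoring usage" could look like, the sketch below caps how many queries each client may send within a sliding time window. The `QueryMonitor` class, its parameters, and the thresholds are hypothetical and are not taken from the paper or any particular library; real systems may use more sophisticated detection.

```python
from collections import defaultdict, deque

class QueryMonitor:
    """Hypothetical per-client sliding-window query limiter."""

    def __init__(self, max_queries, window_seconds):
        self.max_queries = max_queries
        self.window_seconds = window_seconds
        self._history = defaultdict(deque)  # client_id -> recent timestamps

    def allow(self, client_id, now):
        """Return True if the query is allowed, False if the client is over budget."""
        recent = self._history[client_id]
        # Drop timestamps that have fallen out of the window.
        while recent and now - recent[0] >= self.window_seconds:
            recent.popleft()
        if len(recent) >= self.max_queries:
            return False
        recent.append(now)
        return True

monitor = QueryMonitor(max_queries=3, window_seconds=10.0)
decisions = [monitor.allow("client-a", t) for t in (0.0, 1.0, 2.0, 3.0, 12.0)]
print(decisions)  # [True, True, True, False, True]
```

A limiter like this does nothing against transfer attacks, since those can succeed without the attacker sending a stream of probing queries to the victim.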

Overall, transfer attacks are very plausible in practice but are not addressed by typical defenses like adversarial training or systems that limit queries.
