HyperFields: towards zero-shot NeRFs by mapping language to 3D geometry

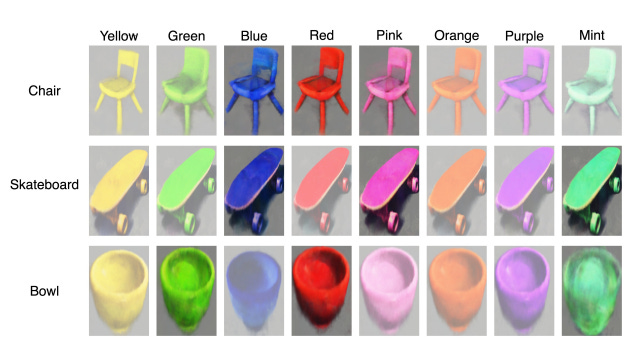

Training AI to generate 3D objects from text descriptions

Recent breakthroughs in natural language processing have enabled AI systems to generate 2D images from text prompts with striking realism and detail. But extending these capabilities to 3D content creation remains extremely challenging. A new technique called HyperFields demonstrates promising progress towards flexible, efficient 3D geometry generation directly from language descriptions.

The Broader Context

Photorealistic image generation from text prompts has advanced rapidly thanks to diffusion models like DALL-E 2 and Stable Diffusion. These models leverage enormous datasets of image-text pairs to learn mappings between language and 2D image distributions.

However, far less 3D data is available than 2D image data, and modeling the complexities of real-world 3D geometry is fundamentally more difficult than modeling 2D images. As a result, text-to-3D generation remains a major unsolved problem in AI research despite intense interest.

Solving this challenge could enable creators to manifest 3D objects, scenes, or even virtual worlds using only their imagination and language descriptions. This could dramatically expand 3D content creation for applications like gaming, CGI, VR/AR, and design.
