Getting a consistent character across image generation runs

Maintaining visual coherence of the same character across different generated images

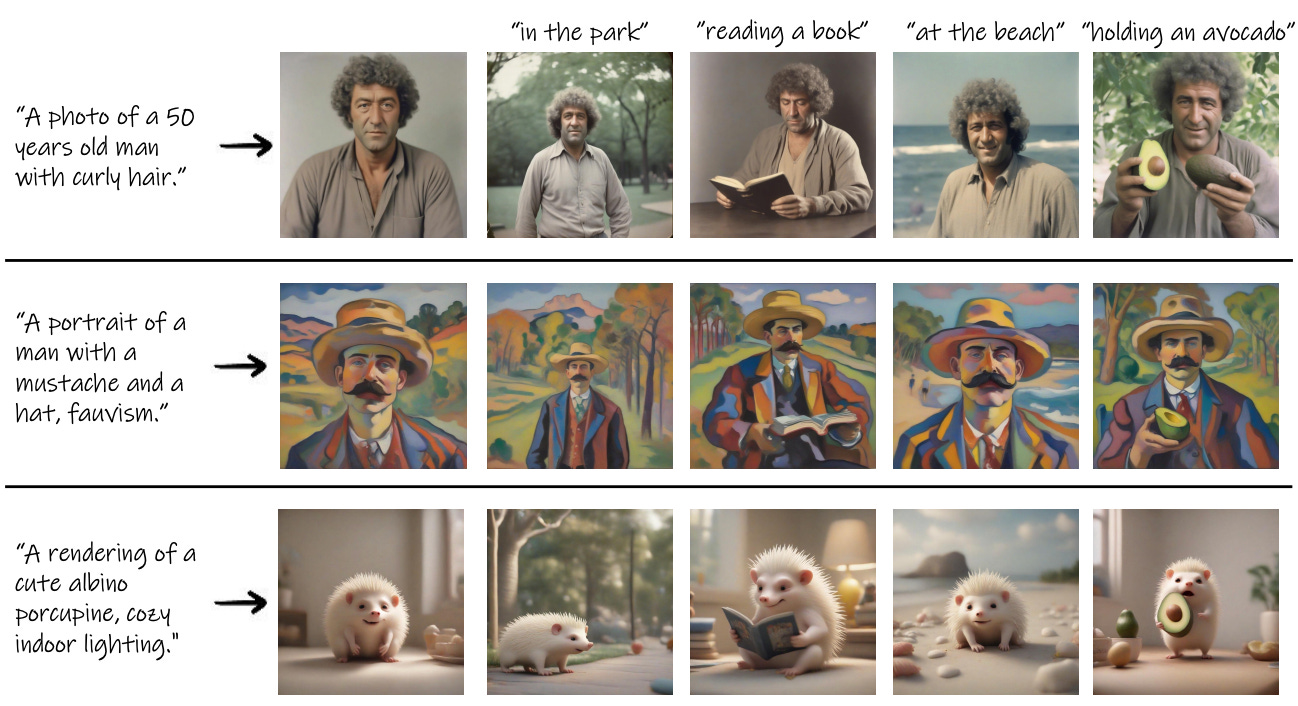

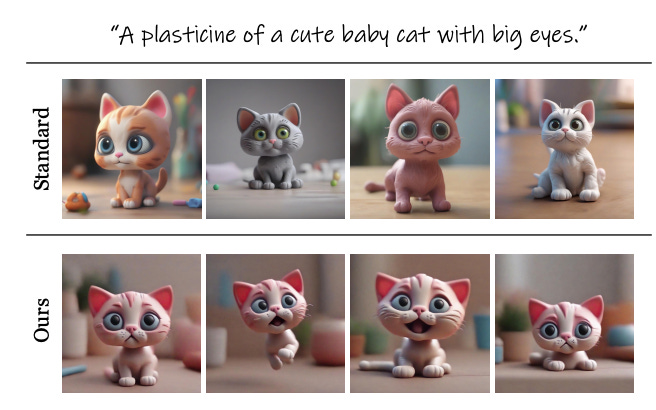

Recent breakthroughs in AI-generated imagery via text-to-image models like DALL-E and Stable Diffusion have unlocked new creative possibilities. However, a persistent challenge is maintaining visual coherence of the same character across different generated images.

Researchers from Google, Hebrew University of Jerusalem, Tel Aviv University, and Reichman University proposed an automated solution to enable consistent character generation in a paper titled "The Chosen One: Consistent Characters in Text-to-Image Diffusion Models."

Let's see what they uncovered and how we can use it in our own work!

The Context

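Text-to-image models like DALL-E and Stable Diffusion are deep neural networks trained on vast datasets of captioned images to generate realistic images from text. In diffusion models such as Stable Diffusion, the prompt is first encoded into a text embedding; the model then starts from random noise in a latent space and iteratively denoises it, conditioned on that embedding, so that the final image matches the description.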

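However, these models struggle to maintain consistency across multiple images of the same character, even when given the same or very similar text prompts. For instance, asking the model several times to generate "a white cat" may produce a cat with a different fur pattern, shade, build, and pose in each image, because every run starts from different random noise and the prompt leaves most of the character's details unspecified.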
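To make "consistency" a bit more concrete, here is a minimal sketch of one way it could be quantified: average the pairwise cosine similarity between feature vectors of the generated images. This is an illustration, not the method from the paper. The function names `cosine_similarity` and `consistency_score` are made up for this example, and the toy 3-dimensional vectors stand in for embeddings that would, in practice, come from an image feature extractor of your choice.

```python
import math
from itertools import combinations


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def consistency_score(embeddings):
    """Mean pairwise cosine similarity over a set of image embeddings.

    Higher values mean the images look more alike in feature space.
    """
    pairs = list(combinations(embeddings, 2))
    if not pairs:
        raise ValueError("need at least two embeddings")
    return sum(cosine_similarity(a, b) for a, b in pairs) / len(pairs)


# Hypothetical toy vectors standing in for real image embeddings.
same_cat = [[1.0, 0.0, 0.1], [0.9, 0.1, 0.1], [1.0, 0.1, 0.0]]
different_cats = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]

print("same cat:      ", round(consistency_score(same_cat), 3))
print("different cats:", round(consistency_score(different_cats), 3))
```

With real embeddings, a set of images that all show the same character should score noticeably higher than a set of unrelated "white cat" generations, which gives a rough number to track when comparing approaches.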

This inability to preserve identity coherence poses challenges for applications like:

- Illustrating stories or textbooks with recurring characters
- Building unique brand personalities and mascots
- Designing video game assets and virtual characters
- Creating advertising campaigns with memorable spokespersons

Without consistency, characters change appearance unpredictably across images. Creators often resort to manual techniques like depicting the character in multiple poses first or carefully hand-picking the best results.