Finally, a CLIP model you can focus wherever you want

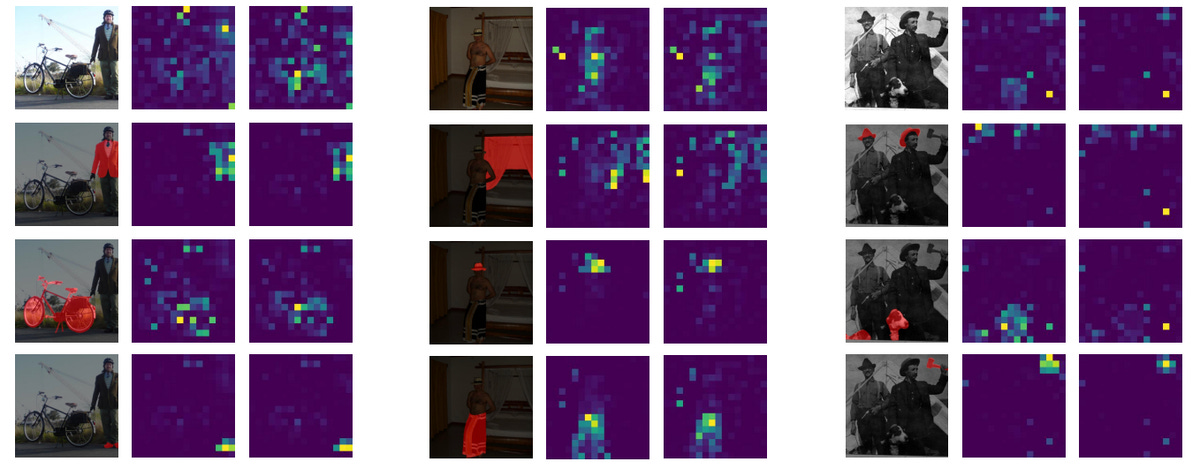

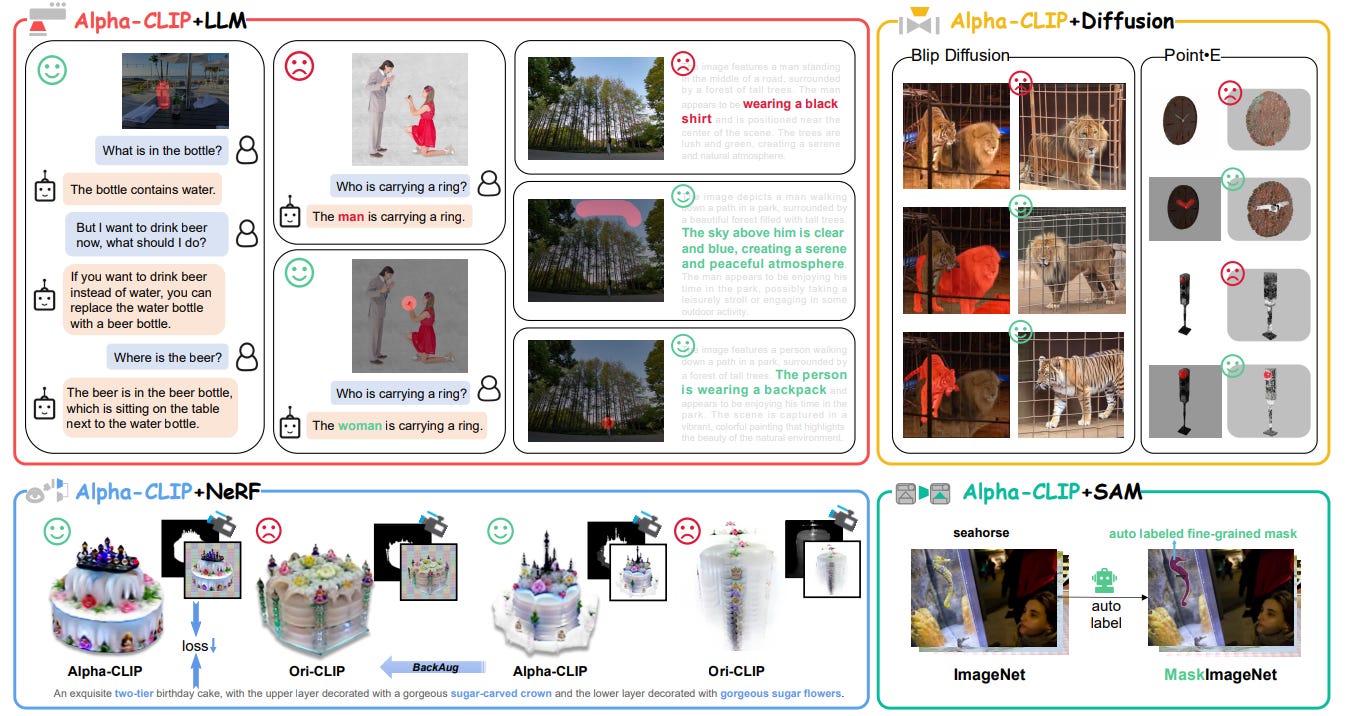

Adding an alpha channel to focus CLIP leads to better performance

Contrastive Language-Image Pretraining, or CLIP, is a method for training a model to link images with text. It is like teaching a computer to connect a picture with the words that describe it. CLIP has two parts. The image encoder translates the visual elements of a picture into embeddings, numeric vectors that capture the meanings or concepts associated with what the image shows. The text encoder does the same for language, mapping words and phrases to embeddings. During training, CLIP aligns these image and text embeddings in a shared space, so that a picture and its matching description land close together and the computer can compare them directly. This approach lets the computer understand images beyond just fixed labels, encoding rich context for use in a wide range of applications, from computer vision to multimedia and even robotics.
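To make the "shared space" idea concrete, here is a minimal sketch of how an image embedding can be compared against several caption embeddings. The three-dimensional vectors are made-up numbers for illustration only (real CLIP embeddings have hundreds of dimensions and come from trained encoders), and the helper names `cosine_similarity` and `best_caption` are hypothetical, not part of any CLIP library.

```python
import math


def normalize(vec):
    """Scale a vector to unit length so only its direction matters."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def cosine_similarity(a, b):
    """Cosine similarity: the dot product of two unit-length vectors."""
    a, b = normalize(a), normalize(b)
    return sum(x * y for x, y in zip(a, b))


def best_caption(image_embedding, caption_embeddings):
    """Return the caption whose embedding is closest to the image embedding."""
    scores = {
        caption: cosine_similarity(image_embedding, emb)
        for caption, emb in caption_embeddings.items()
    }
    return max(scores, key=scores.get), scores


# Made-up embeddings standing in for encoder outputs (an assumption,
# not real CLIP values).
image_embedding = [0.9, 0.1, 0.2]
caption_embeddings = {
    "a photo of a dog": [0.8, 0.2, 0.1],
    "a photo of a car": [0.1, 0.9, 0.3],
}

best, scores = best_caption(image_embedding, caption_embeddings)
print(best)
```

In this toy setup the image vector points in nearly the same direction as the "dog" caption vector, so that caption scores highest. Picking the nearest caption this way is the basic idea behind using CLIP for zero-shot classification.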

In this post, we'll take a look at a paper that proposes a new implementation of CLIP called Alpha-CLIP. The researchers claim that it performs better thanks to guided attention and that it can even be swapped directly into applications that use the base CLIP model. Let's see what they came up with and why their approach works.
